
12/17/2023

Visualize sklearn decision tree

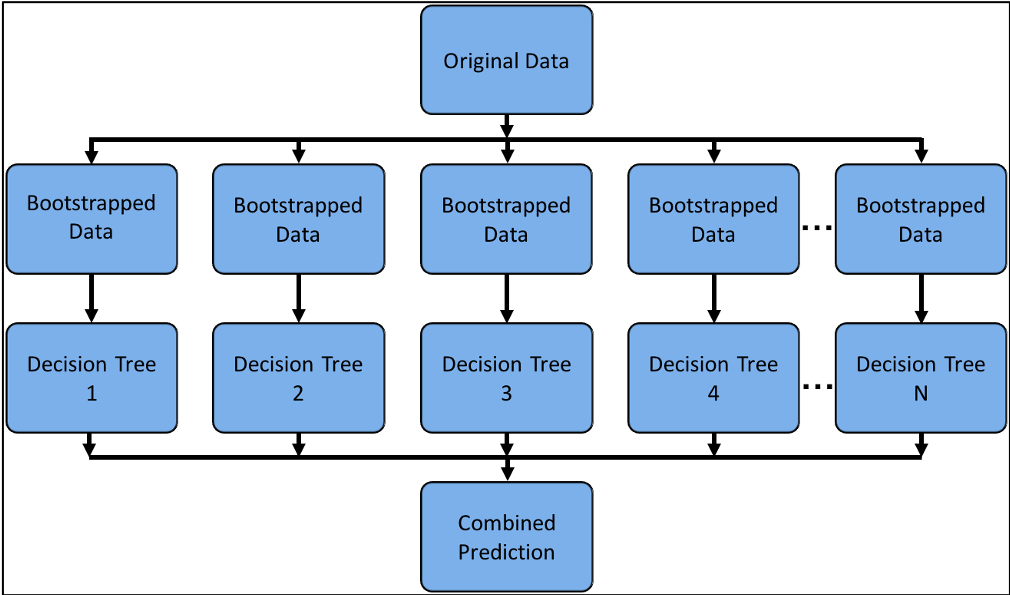

The disadvantages of decision trees include:

- Decision-tree learners can create over-complex trees that do not generalize the data well (overfitting). Mechanisms such as pruning, setting the minimum number of samples required at a leaf node, or setting the maximum depth of the tree are necessary to avoid this problem.
- Decision trees can be unstable because small variations in the data might result in a completely different tree being generated. This problem is mitigated by using decision trees within an ensemble.

On the other hand, a decision tree performs well even if its assumptions are somewhat violated by the true model from which the data were generated.
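As a rough illustration of the overfitting controls and the ensemble remedy named above, the sketch below compares an unconstrained tree with one limited by `max_depth`, `min_samples_leaf`, and cost-complexity pruning (`ccp_alpha`), and fits a random forest as one example of an ensemble. The iris dataset, the specific parameter values, and the variable names are assumptions chosen for illustration; they do not come from the post.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Assumption: iris data with the same split settings the post uses.
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=1, stratify=y
)

# Unconstrained tree: keeps splitting until the leaves are pure.
full_tree = DecisionTreeClassifier(random_state=1).fit(X_train, y_train)

# Constrained tree: depth limit, minimum leaf size, and cost-complexity pruning.
# The values 3, 5, and 0.01 are illustrative, not tuned.
small_tree = DecisionTreeClassifier(
    max_depth=3, min_samples_leaf=5, ccp_alpha=0.01, random_state=1
).fit(X_train, y_train)

# An ensemble of trees, one common way to reduce the instability of a single tree.
forest = RandomForestClassifier(n_estimators=100, random_state=1).fit(X_train, y_train)

for name, tree_model in [("unconstrained", full_tree), ("constrained", small_tree)]:
    print(
        f"{name}: depth={tree_model.get_depth()}, "
        f"leaves={tree_model.get_n_leaves()}, "
        f"test accuracy={tree_model.score(X_test, y_test):.3f}"
    )
print(f"random forest: test accuracy={forest.score(X_test, y_test):.3f}")
```

On a dataset as small and clean as iris the accuracy gap may be negligible; the point of the comparison is the difference in tree size.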

Possible to account for the reliability of the model. Possible to validate a model using statistical tests. Network), results may be more difficult to interpret. The explanation for the condition is easily explained by boolean logic.īy contrast, in a black box model (e.g., in an artificial neural If a given situation is observable in a model, Techniques are usually specialized in analyzing datasets that have only one type Implementation does not support categorical variables for now. Number of data points used to train the tree.Īble to handle both numerical and categorical data.

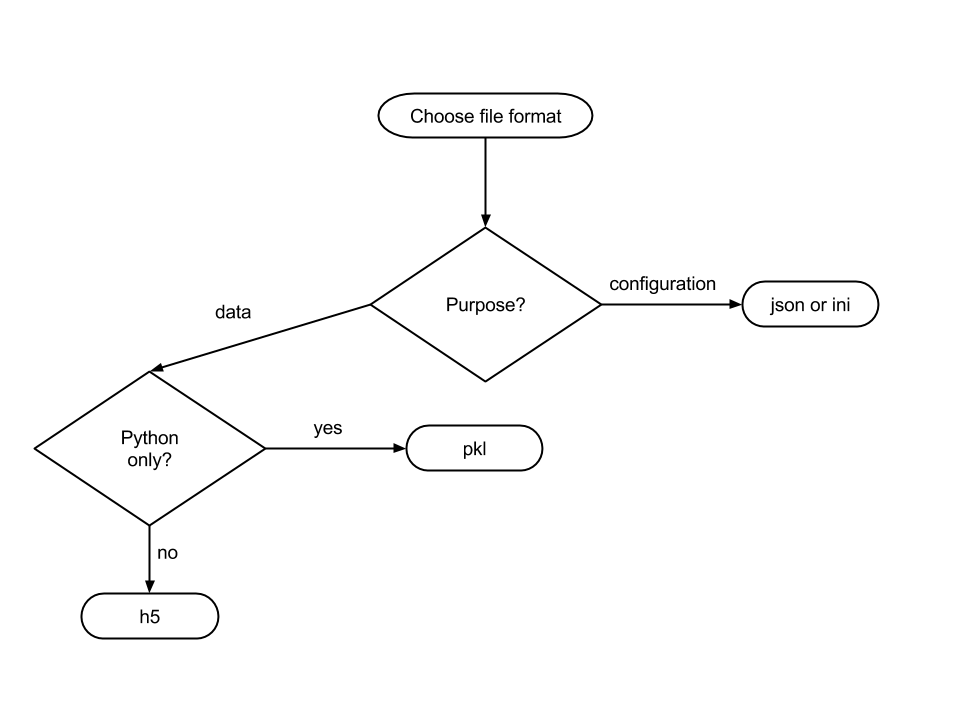

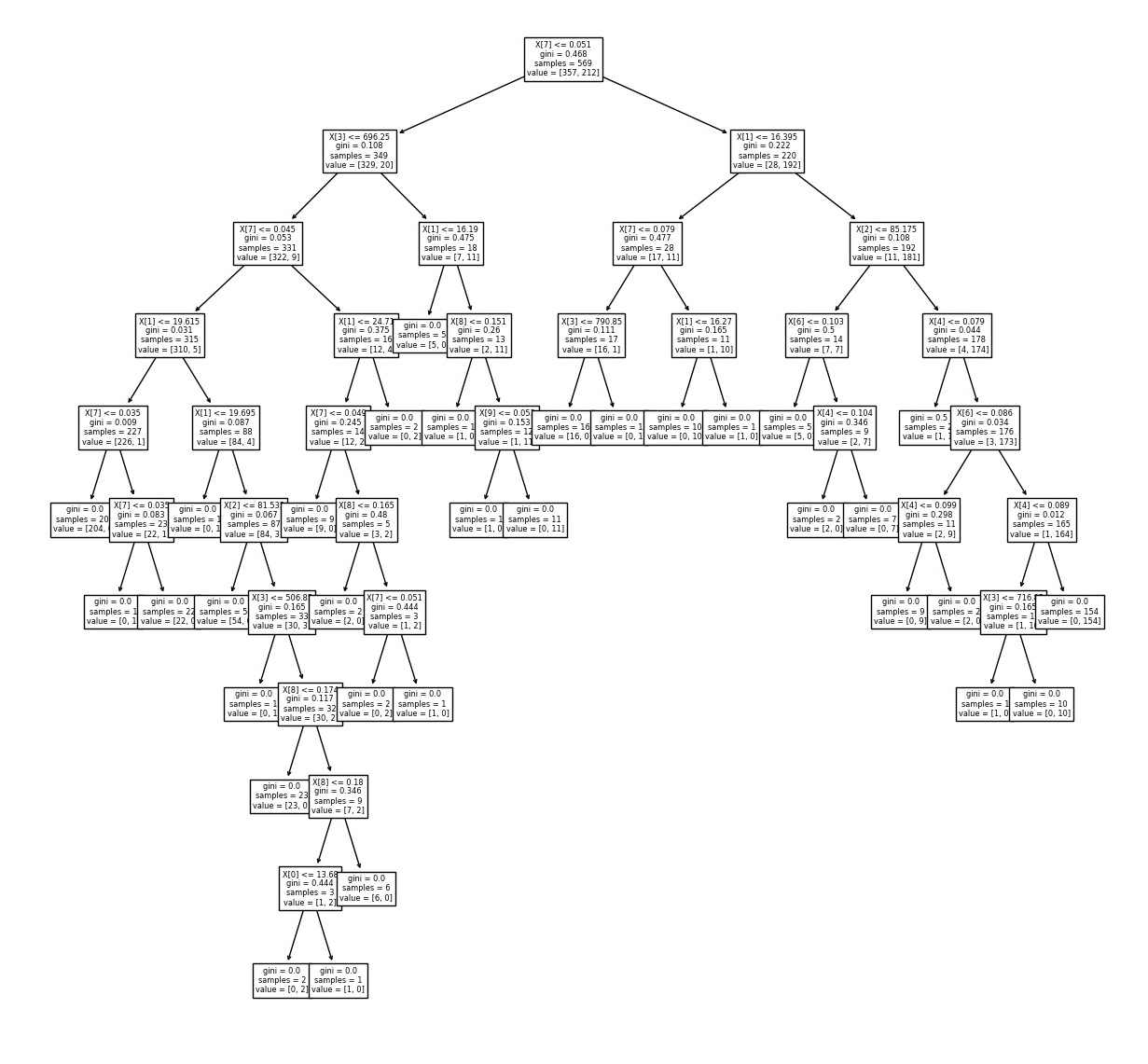

The cost of using the tree (i.e., predicting data) is logarithmic in the Some tree and algorithm combinations support Normalization, dummy variables need to be created and blank values toīe removed. In this post, you learned about how to create a visualization diagram of decision tree using two different techniques ( ee plot_tree method) and GraphViz method.Simple to understand and to interpret. Decision tree visualization using Graphviz (Max depth = 3) Decision tree visualization using Graphviz (Max depth = 4)Ĭhange the max_depth of the tree as 3 and this is how the tree will look like. The left child node results in the pure data set belonging to Versicolor class with Gini impurity as 0.įig 2. Right child node is split further into two child nodes.Left child node can be said as a pure or homogenous node as it has all the data points belonging to Setosa class.Root node splits the training dataset (105) into two child nodes with 35 and 70 data points.Note some of the following in the tree drawn below:
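The purity remarks about the root node and its children can be checked by hand with the Gini impurity formula, 1 minus the sum of squared class proportions. The sketch below is a minimal illustration; the per-class counts are assumptions inferred from the 105-row stratified split of the balanced iris data (35 rows per class), and `gini_impurity` is a hypothetical helper, not a scikit-learn function.

```python
def gini_impurity(class_counts):
    """Return 1 - sum(p_k ** 2) for a node with the given per-class sample counts."""
    total = sum(class_counts)
    if total == 0:
        return 0.0
    return 1.0 - sum((count / total) ** 2 for count in class_counts)


# Assumed counts in the order (Setosa, Versicolor, Virginica).
root = [35, 35, 35]         # all 105 training rows
left_child = [35, 0, 0]     # the 35-row node containing only Setosa
right_child = [0, 35, 35]   # the remaining 70 rows

for name, counts in [("root", root), ("left child", left_child), ("right child", right_child)]:
    print(f"{name}: n={sum(counts)}, gini={gini_impurity(counts):.3f}")
```

Under these assumed counts the pure Setosa node has impurity 0, while the 70-row node with two equally sized classes has impurity 0.5, which is why only that node is split further.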

The code below splits the data and trains the decision tree classifier:

    from sklearn.datasets import load_iris
    from sklearn.model_selection import train_test_split
    from sklearn.tree import DecisionTreeClassifier
    # Assumption: X and y come from the iris dataset (the post refers to Setosa, Versicolor, and 105 training rows)
    X, y = load_iris(return_X_y=True)
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=1, stratify=y)
    # Train the model using DecisionTree classifier
    clf_tree = DecisionTreeClassifier(criterion='gini', max_depth=4, random_state=1)
    clf_tree.fit(X_train, y_train)

Here is how the decision tree would look:

Fig 1. Decision tree visualization using the sklearn.tree plot_tree method

GraphViz for Decision Tree Visualization

In this section, you will learn how to create a nicer visualization using the GraphViz library. Libraries such as GraphViz and PyDotPlus may need to be installed (in that order) before creating the visualization. PyDotPlus converts dot data files into a decision tree image file.

    from pydotplus import graph_from_dot_data
    from sklearn.tree import export_graphviz
    # The remaining export_graphviz arguments are truncated in the source; out_file=None returns the DOT text as a string
    dot_data = export_graphviz(clf_tree, filled=True, rounded=True, out_file=None)
    graph = graph_from_dot_data(dot_data)
    graph.write_png('/Users/apple/Downloads/tree.png')

Here is how the tree visualization looks. Note the difference between the tree visualization created using GraphViz (fig 2) and without using GraphViz (fig 1).
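The `plot_tree` call behind Fig 1 does not survive in the text, and the `export_graphviz` call is cut off, so the following is a minimal end-to-end sketch of both techniques rather than the author's exact code. It assumes the full iris feature set, takes the feature and class labels from `load_iris`, and renders to an in-memory buffer instead of a file; all of these choices are assumptions made for illustration.

```python
import io

import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the sketch also runs without a display
import matplotlib.pyplot as plt
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier, export_graphviz, plot_tree

# Assumption: the full iris feature set; the post does not show how X and y were built.
iris = load_iris()
X, y = iris.data, iris.target
feature_names = list(iris.feature_names)
class_names = [str(name) for name in iris.target_names]

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=1, stratify=y
)
clf_tree = DecisionTreeClassifier(criterion="gini", max_depth=4, random_state=1)
clf_tree.fit(X_train, y_train)

# Technique 1: draw the tree with matplotlib through sklearn.tree.plot_tree.
fig, ax = plt.subplots(figsize=(10, 10))
annotations = plot_tree(
    clf_tree,
    feature_names=feature_names,
    class_names=class_names,
    filled=True,
    fontsize=10,
    ax=ax,
)
buffer = io.BytesIO()  # replace with a file path to write the image to disk
fig.savefig(buffer, format="png")
plt.close(fig)

# Technique 2: export the tree as DOT text, which GraphViz tools or PyDotPlus can render.
dot_data = export_graphviz(
    clf_tree,
    out_file=None,  # None returns the DOT source as a string
    feature_names=feature_names,
    class_names=class_names,
    filled=True,
    rounded=True,
)
print(dot_data.splitlines()[0])
```

Producing the DOT text needs only scikit-learn; turning it into a PNG additionally requires the GraphViz binaries and a wrapper such as PyDotPlus, as described in the GraphViz section.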
